Combining AI AppSec, Autonomous GitHub Agents, And Agentic Security Workflows To Scale Modern Application Security

Table Of Contents

- Introduction

- Why Traditional AppSec Struggles To Scale

- The Rise Of AI AppSec And Autonomous Development

- What Are Autonomous GitHub Agents?

- How GitHub Agents Improve Shift-Left Security

- Automated Security Testing In AI-Native Environments

- Why Security Teams Need More Than Vulnerability Detection

- How Bright Agent And GitHub Agents Work Together

- The Business Benefits Of AI-Powered AppSec

- The Future Of Autonomous Security Operations

- FAQ

- Final Thoughts

Introduction

Software development is changing in a fundamental way. Artificial intelligence no longer just helps the people who write code. It now takes part in the process itself: reviewing code changes, generating documentation, running tests automatically, and speeding up delivery from start to finish.

As companies adopt AI for coding, AI coding assistants and tools are maturing quickly. Teams can now build APIs, cloud services, infrastructure automation, and production-ready applications in hours instead of weeks.

While this speed is good for business, it also creates problems:

- More code changes

- More security findings

- Faster deployment cycles

- Larger attack surfaces

- Greater AppSec complexity

Traditional security processes were never designed for this level of velocity.

This is why organizations are investing heavily in:

AI AppSec and autonomous security workflows

Modern AppSec teams need security solutions that move at the same speed as developers. Instead of relying on manual reviews and disconnected workflows, organizations are increasingly adopting autonomous GitHub agents and intelligent security agents that continuously support development teams throughout the SDLC.

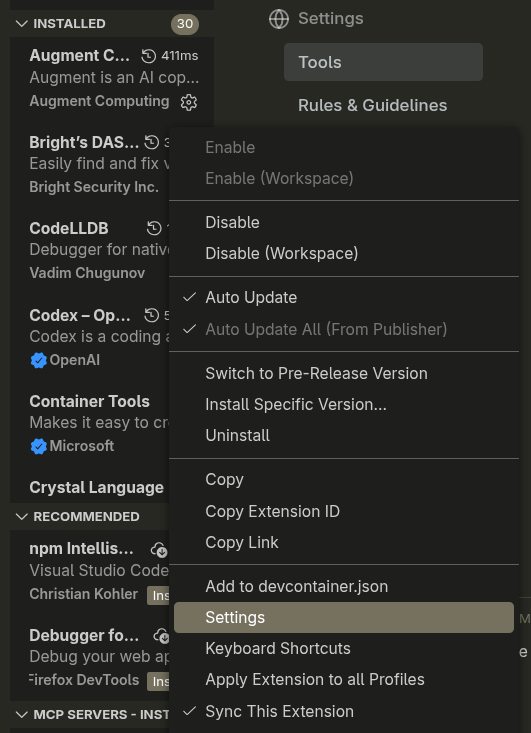

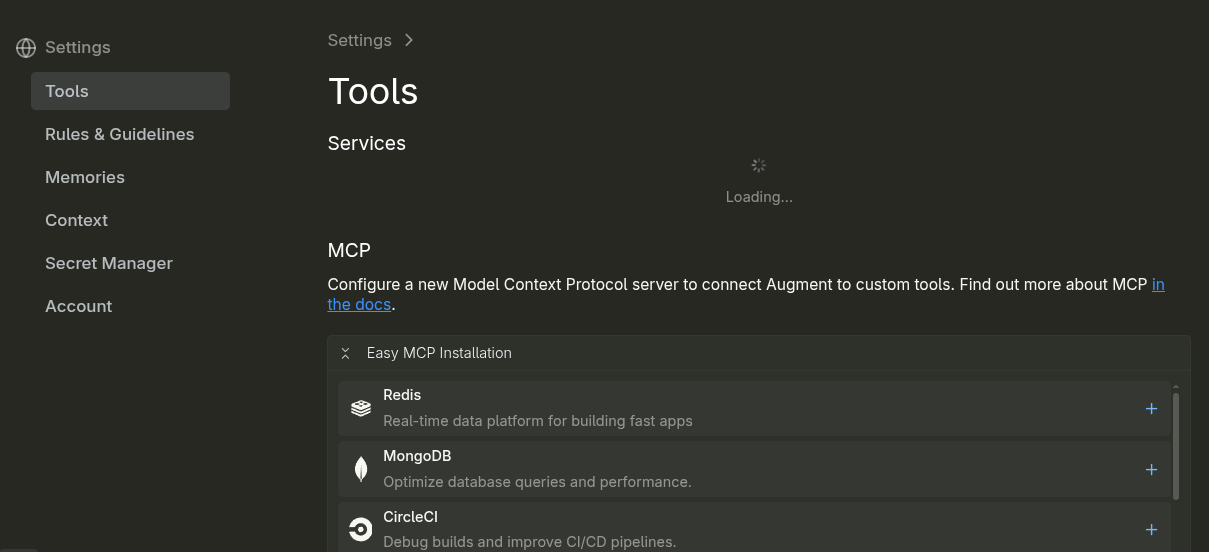

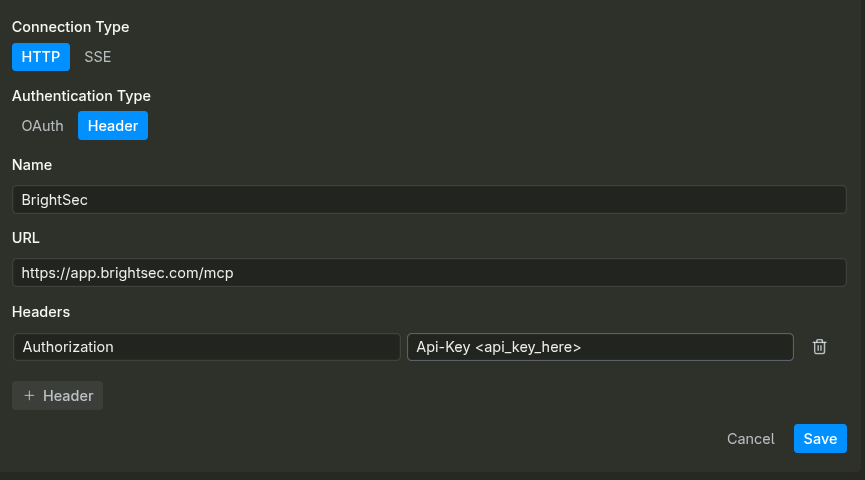

At Bright Security, this vision is powered through:

Bright Agent

An AI-powered AppSec teammate designed to help organizations discover, validate, prioritize, and remediate vulnerabilities without slowing engineering velocity.

Why Traditional AppSec Struggles To Scale

Traditional AppSec programs were built around periodic reviews, manual penetration testing, vulnerability triage meetings, and security gates that occurred after development was largely complete.

That model worked when applications evolved slowly.

Today, engineering teams operate in environments driven by:

- Continuous deployment

- Cloud-native infrastructure

- API-first architectures

- AI-generated development

- Autonomous workflows

The rise of AI coding assistants and generative AI tools for coding has dramatically increased software output across organizations.

However, security teams often face:

- Growing vulnerability backlogs

- Alert fatigue

- Resource limitations

- Delayed remediation

- Developer frustration

As application complexity increases, security teams cannot simply hire enough people to keep pace.

Modern AppSec increasingly requires:

Security automation that scales alongside development

Organizations need intelligent systems capable of continuously assisting security operations without creating bottlenecks for engineering teams.

The Rise Of AI AppSec And Autonomous Development

AI is rapidly becoming a foundational component of modern software development.

The growth of AI coding assistants, AI coding tools, and coding-focused AI models has transformed how developers build applications.

Today AI can assist with:

- Code generation

- Infrastructure automation

- Documentation creation

- Test generation

- Workflow orchestration

This dramatically increases productivity but also increases the volume of code entering production environments.

AI-generated applications can introduce:

- Authentication weaknesses

- API security issues

- Logic flaws

- Configuration mistakes

- Dependency risks

This means AppSec must evolve as well.

Modern organizations increasingly focus on:

AI-powered security operations that support AI-powered development

Because security must now operate at machine speed.

What Are Autonomous GitHub Agents?

Autonomous GitHub agents are AI-powered systems capable of interacting directly with repositories, pull requests, workflows, tickets, and development pipelines.

Unlike traditional automation tools, these agents can understand context and take meaningful actions across development workflows.

GitHub agents can automatically help:

- Review pull requests

- Analyze code changes

- Generate documentation

- Trigger workflows

- Identify potential security concerns

This allows engineering organizations to reduce repetitive tasks while improving productivity and software quality.

Modern GitHub agents increasingly function as:

AI teammates embedded directly into development workflows

Helping teams focus on solving problems rather than managing processes.

How GitHub Agents Improve Shift-Left Security

Shift-Left security focuses on identifying risks earlier in the software development lifecycle.

Autonomous GitHub agents strengthen this approach by providing security awareness directly inside development workflows.

Rather than waiting until staging or production, developers can receive security guidance during:

- Code creation

- Pull request reviews

- Build pipelines

- Deployment preparation

This helps organizations:

- Reduce remediation costs

- Improve code quality

- Minimize security debt

- Strengthen developer awareness

The result is a development culture where security becomes a continuous process rather than a final checkpoint.

Modern organizations increasingly understand that:

The earlier vulnerabilities are addressed, the lower the operational cost

And GitHub agents help make that possible at scale.
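To make the idea of security guidance during pull request review more concrete, here is a minimal sketch of the kind of check an agent might run on the lines a pull request adds. The pattern list and the `review_diff` function are hypothetical and exist only for illustration; they do not describe how any particular GitHub agent or Bright Agent works, and real agents rely on much richer context than two regular expressions.

```python
import re

# Hypothetical patterns for illustration only; real agents use far richer analysis.
RISKY_PATTERNS = {
    "hard-coded secret": re.compile(
        r"(password|secret|api_key)\s*=\s*['\"][^'\"]+['\"]", re.IGNORECASE
    ),
    "disabled TLS verification": re.compile(r"verify\s*=\s*False"),
}


def review_diff(diff_text: str) -> list[str]:
    """Return one comment per risky line added in a unified diff."""
    comments = []
    for line in diff_text.splitlines():
        # Only inspect added lines; skip the "+++ b/file" header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        added = line[1:]
        for label, pattern in RISKY_PATTERNS.items():
            if pattern.search(added):
                comments.append(f"Possible {label}: {added.strip()}")
    return comments


sample_diff = """\
--- a/app.py
+++ b/app.py
@@ -1,2 +1,3 @@
 import requests
+API_KEY = "abc123"
+resp = requests.get(url, verify=False)
"""
for comment in review_diff(sample_diff):
    print(comment)
```

Because the check looks only at added lines, the developer gets feedback on the change under review rather than on the whole codebase, which is what keeps early guidance cheap to act on.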

Automated Security Testing In AI-Native Environments

Modern applications evolve continuously.

As organizations continue using AI for coding and adopting AI-native development workflows, security testing must evolve beyond periodic scans and manual reviews.

Automated security testing allows organizations to:

- Identify issues faster

- Improve development velocity

- Reduce manual effort

- Strengthen application resilience

Without slowing developers down.

This becomes especially important in environments that rely on:

- Continuous deployment

- API-first architectures

- Cloud-native applications

- AI-generated development

Modern AppSec increasingly depends on:

Continuous security validation integrated into development workflows

Because waiting until the end of development is no longer practical.
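One way to picture continuous validation inside a pipeline is a small gate that runs after every automated scan. The sketch below is an assumption-laden illustration: the `ScanResult` fields, the severity labels, and the blocking rule are invented for this example and are not taken from any specific scanner or from Bright Agent.

```python
from dataclasses import dataclass


@dataclass
class ScanResult:
    title: str
    severity: str    # assumed labels: "low", "medium", "high", "critical"
    validated: bool  # True if the issue was confirmed, not just suspected


# Hypothetical policy: only these severities can stop a release.
BLOCKING_SEVERITIES = {"high", "critical"}


def should_block_release(results: list[ScanResult]) -> bool:
    """Block only on validated, high-impact issues so noise does not stall delivery."""
    return any(r.validated and r.severity in BLOCKING_SEVERITIES for r in results)


results = [
    ScanResult("Verbose error page", "low", validated=True),
    ScanResult("SQL injection in /search", "critical", validated=True),
    ScanResult("Possible XSS (unconfirmed)", "high", validated=False),
]
print("block release" if should_block_release(results) else "safe to ship")
```

Gating on validated findings rather than on every alert is one possible design choice for keeping checks continuous without slowing developers down.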

Why Security Teams Need More Than Vulnerability Detection

Finding vulnerabilities is important.

But discovering issues is only one part of the AppSec challenge.

Many organizations already have security tools that generate alerts. The real challenge is deciding:

- What matters most?

- What should be fixed first?

- Which vulnerabilities are truly exploitable?

- How can remediation happen faster?

This is where many AppSec programs struggle.

Security teams frequently face:

- Thousands of findings

- Limited resources

- Remediation bottlenecks

- Developer fatigue

Modern AppSec teams increasingly need:

Intelligent security operations rather than more security alerts

Because successful security programs are measured by remediation outcomes – not vulnerability counts.
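The questions above (what matters most, what to fix first, what is truly exploitable) can be sketched as a simple ordering rule. This is only an illustration under stated assumptions: the `Finding` fields, the `SEVERITY_RANK` weights, and the ordering itself are hypothetical, and a real program would tune them to its own risk model.

```python
from dataclasses import dataclass

# Assumed ranking for illustration; higher means more severe.
SEVERITY_RANK = {"critical": 4, "high": 3, "medium": 2, "low": 1}


@dataclass
class Finding:
    title: str
    severity: str
    exploitable: bool      # confirmed by validation rather than assumed
    internet_facing: bool  # whether the affected component is exposed


def prioritize(findings: list[Finding]) -> list[Finding]:
    """Order findings: confirmed-exploitable first, then exposure, then severity."""
    return sorted(
        findings,
        key=lambda f: (f.exploitable, f.internet_facing, SEVERITY_RANK[f.severity]),
        reverse=True,
    )


backlog = [
    Finding("Outdated library (no known exploit path)", "critical", False, False),
    Finding("Broken access control on /api/orders", "high", True, True),
    Finding("Missing security header", "low", True, False),
]
for f in prioritize(backlog):
    print(f.title)
```

In this sketch, a confirmed and exposed issue outranks a nominally critical finding with no known exploit path, which reflects the article's point that exploitability, not raw alert volume, should drive remediation order.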

How Bright Agent And GitHub Agents Work Together

Modern AppSec teams are struggling with a simple problem: developers are shipping code faster than security teams can review it.

The rise of AI coding assistants and GitHub-native AI workflows has dramatically accelerated software delivery. While this improves engineering productivity, it also creates larger attack surfaces and significantly more security findings.

This is where Bright Agent changes the equation.

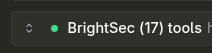

Bright Agent operates as an autonomous AppSec teammate that works alongside developers and GitHub agents throughout the software development lifecycle. Instead of simply identifying vulnerabilities, it helps teams understand risk, prioritize issues, recommend fixes, and drive remediation automatically.

Bright Agent continuously helps organizations:

- Discover security issues

- Validate findings

- Prioritize exploitable risks

- Generate remediation guidance

- Accelerate vulnerability resolution

Unlike traditional security tooling that stops after creating alerts, Bright Agent focuses on helping organizations move from detection to resolution.

One of the biggest advantages of combining autonomous GitHub agents with Bright Agent is the creation of a continuous security feedback loop.

GitHub agents can analyze pull requests, review code changes, and monitor development workflows. Bright Agent extends those capabilities by providing security context, remediation intelligence, and workflow automation directly inside existing engineering processes.

Together, they help organizations:

- Reduce manual security reviews

- Improve remediation speed

- Lower security backlog growth

- Strengthen developer productivity

- Scale AppSec operations efficiently

This allows security teams to spend less time managing alerts and more time improving overall security posture.

As organizations continue adopting AI-native engineering practices, Bright Agent becomes a critical component of modern AppSec programs by bringing autonomous security operations directly into development workflows.

Modern security teams do not need more dashboards or more alerts. They need outcomes.

Bright Agent was built around this philosophy. It combines intelligent analysis, workflow automation, GitHub integration, and remediation support so that companies can improve security without slowing software delivery.

Instead of making developers juggle many different security tools, Bright Agent delivers security help where they already work. This makes application security more effective for teams and easier for developers to adopt.

The Business Benefits Of AI-Powered AppSec

Organizations investing in AI AppSec increasingly see benefits beyond vulnerability detection.

AI-powered AppSec programs help improve:

- Developer productivity

- Security scalability

- Remediation efficiency

- Engineering velocity

- Operational resilience

Modern enterprises adopting AI coding assistants are discovering that intelligent automation allows security teams to support larger engineering organizations without dramatically increasing headcount.

Strong AI-powered AppSec programs help organizations:

Scale security outcomes without scaling operational complexity

This becomes increasingly important as software ecosystems continue expanding.

The Future Of Autonomous Security Operations

The future of AppSec will increasingly be defined by autonomous systems working alongside developers, security teams, and engineering leaders.

AI-powered agents will continue helping organizations:

- Analyze code

- Prioritize risk

- Recommend fixes

- Automate workflows

- Accelerate remediation

Rather than operating as standalone security tools, future AppSec platforms will function as intelligent security teammates embedded directly into development environments.

Organizations that successfully combine:

- AI AppSec automation

- Autonomous GitHub agents

- Shift-Left security

- Continuous validation

- Developer-centric workflows

will be able to scale security far more effectively than those relying on traditional security processes alone.

Platforms like Bright Agent are helping organizations move toward this future today.

Because the future of AppSec is not simply about finding vulnerabilities.

It is about:

Continuously discovering, prioritizing, and remediating risk through intelligent autonomous workflows

FAQ

What Is AI AppSec?

AI AppSec uses artificial intelligence to improve vulnerability discovery, remediation workflows, security prioritization, and application security operations across the SDLC.

What Are Autonomous GitHub Agents?

Autonomous GitHub agents are AI-powered systems that interact with repositories, pull requests, workflows, and development pipelines to automate development and security processes.

Why Is Shift-Left Security Important?

Shift-Left security helps organizations identify vulnerabilities earlier in development, reducing remediation costs and improving overall software quality.

What Is Bright Agent?

Bright Agent is an AI-powered AppSec teammate that helps organizations discover, validate, prioritize, and remediate vulnerabilities through autonomous security workflows integrated into modern development environments.

Final Thoughts

Modern software development is accelerating rapidly through AI-powered engineering workflows.

The rise of AI coding assistants and AI coding tools has fundamentally changed how applications are built and delivered.

But faster development also creates:

- Larger attack surfaces

- More vulnerabilities

- Greater operational complexity

- Increased AppSec pressure

Modern organizations increasingly require:

- AI AppSec automation

- Autonomous GitHub agents

- Shift-Left security

- Continuous validation

- Intelligent remediation workflows

Autonomous GitHub agents help companies find security problems sooner. Bright Agent then validates those findings, determines which ones matter most, and drives their remediation through automated workflows.

Together, they form an intelligent application security strategy that finds security issues, automatically decides which to fix first, and remediates them without manual intervention. This helps companies keep their applications secure without slowing software delivery.

Because in modern software development:

Security must evolve from a checkpoint into a continuous, autonomous process.