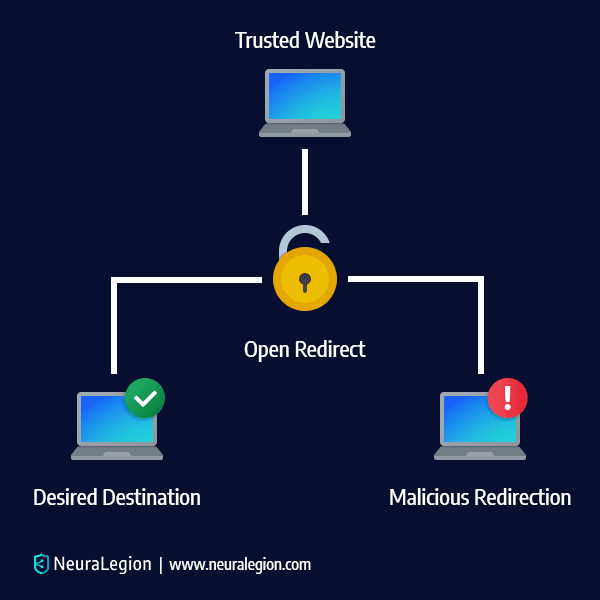

What is an Open Redirect Vulnerability?

An Open Redirect Vulnerability lets an attacker craft a link to a trusted site that redirects the user to another site of the attacker's choosing, which may be malicious. The cybersecurity community often underrates Open Redirect Vulnerabilities because they are considered a simple flaw commonly connected to phishing scams and social engineering.

However, Open Redirect Vulnerabilities can help attackers in ways that go far beyond phishing. The true risk emerges when an Open Redirect is combined with other weaknesses, such as Server-Side Request Forgery (SSRF), an XSS Auditor bypass, or an OAuth flaw. We will cover these in depth later in this post.

In this article:

- Open Redirect Vulnerability Example

- Impact of Open Redirection Attacks

- Types of Open Redirects

- Open Redirect in Combination with Other Attacks

- Open Redirect Vulnerability Fix

- Detecting and Preventing Open Redirect with Bright

Open Redirect Vulnerability Example

Here is an example of PHP code that obtains a URL from a query string:

```php
<?php
// The redirect target is taken directly from the query string, unvalidated.
$redirect_url = $_GET['url'];
header("Location: " . $redirect_url);
```

This code reads the URL from user input and redirects the victim to it. Because header() does not stop the script, any PHP code after it continues executing. If a client is configured to ignore the redirect, it can still access the rest of the page.

The code is vulnerable because nothing validates the destination URL before the redirect is issued.

For example, threat actors can use this vulnerability as part of a phishing campaign: with no validation in place, they can create a hyperlink on the trusted domain that redirects users to a malicious site. Here is what such a hyperlink may look like:

http://vulnerablesite.com/vulnerable.php?url=http://malicious.com
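
To see why the link above looks trustworthy, it can help to split it into the host the victim sees and the host they end up on. The following is a minimal sketch of that idea. The helper name `inspect_redirect_link` is hypothetical, and the parameter name `url` is simply taken from the example above.

```php
<?php
// Hypothetical helper: reports the host shown in the link and the host named
// in the redirect parameter, so a mismatch between the two is easy to spot.
function inspect_redirect_link(string $link, string $param = 'url'): array
{
    $visibleHost = parse_url($link, PHP_URL_HOST);
    $query = parse_url($link, PHP_URL_QUERY);
    if (!is_string($query)) {
        $query = ''; // no query string, or the link could not be parsed
    }

    // parse_str() decodes the query string into an associative array.
    parse_str($query, $params);
    $target = $params[$param] ?? null;

    $targetHost = is_string($target) ? parse_url($target, PHP_URL_HOST) : null;
    if (!is_string($targetHost)) {
        $targetHost = null; // relative or malformed target
    }

    return [
        'visible_host' => is_string($visibleHost) ? $visibleHost : null,
        'target_host'  => $targetHost,
        'leaves_site'  => $targetHost !== null && $targetHost !== $visibleHost,
    ];
}

$info = inspect_redirect_link(
    'http://vulnerablesite.com/vulnerable.php?url=http://malicious.com'
);
```

For the example link, the visible host is vulnerablesite.com while the target host is malicious.com, which is exactly the mismatch a victim is unlikely to notice.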

Impact of Open Redirection Attacks

Open redirection attacks are most commonly used to support phishing attacks, or redirect users to malicious websites.

Exploiting Open Redirect for Phishing

A user who clicks a link while browsing a legitimate website typically does not suspect anything if a login prompt or other form suddenly appears. Threat actors exploit this by sending victims a link to a trusted website and then using the open redirect vulnerability to send them on to a malicious URL. These URLs often lead to phishing pages that look trustworthy and similar to the original site.

Once the victim browses the malicious website, they are typically prompted to enter credentials on a login form. The form points to a script, which is controlled by the actor, usually for the purpose of stealing the user credentials typed by the victim.

The attacker saves the stolen credentials and later uses them to impersonate the victims on the legitimate website. Because the link itself shows the legitimate website's domain, victims are more likely to trust it, which raises the chance of a successful phishing attack.

Exploiting Open Redirect to Redirect to Malicious Websites

Threat actors can use this vulnerability to redirect users to websites hosting attacker-controlled content, such as browser exploits or pages executing CSRF attacks. If the website that the link is pointing to is trusted by the victim, the victim is more likely to click on the link. For example, an open redirect on a trustworthy banking website can redirect a victim to a page containing a CSRF exploit designed against a vulnerable WordPress plugin.

Related content: Read our guide to CSRF mitigation.

Types of Open Redirects

For the purposes of this blog, we will focus primarily on Open Redirect vulnerabilities that are header-based or JavaScript-based. Header-based redirects work even when JavaScript isn’t interpreted.

Header-Based Open Redirection

First things first, let’s talk about Header-based Open Redirection, since it works even when JavaScript doesn’t get interpreted. An HTTP Location header is a response header that does two things:

- It asks the browser to redirect to a URL.

- It provides information regarding the location of a resource that was recently created.

Basically, it’s JavaScript-independent, and attackers use it to redirect the targeted user to another website. It’s simple but also incredibly effective if the user doesn’t carefully examine the URL for any strange additions.

JavaScript-Based Open Redirection

Server-side code handles tasks such as validating data and requests and then sending the correct data to the user. A JavaScript-based Open Redirection runs in the browser, so it won’t always work against a server-side function, but an unsuspecting victim and their web browser remain susceptible to exploitation.

When an attacker manages to perform a redirect in JavaScript, many dangerous vulnerabilities become possible. Because Open Redirections are mostly used in phishing scams, many people are unaware that they can also be one link in a more complex chain of attacks in which multiple vulnerabilities are exploited, and the JavaScript-based Open Redirection is an important part of that chain. For example, redirecting a user to javascript:something() results in a dangerous Cross-Site Scripting (XSS) injection.
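
One way to guard against the javascript: case is to accept only redirect targets with an explicitly permitted scheme. The sketch below assumes that only absolute http and https URLs are wanted. The helper name `has_allowed_scheme` is hypothetical, and relative paths are rejected here and would need separate handling.

```php
<?php
// Hypothetical helper: accept only absolute URLs whose scheme is on a short
// allow-list, so values such as "javascript:something()" are rejected.
function has_allowed_scheme(string $url, array $allowed = ['http', 'https']): bool
{
    // Surrounding whitespace may be ignored by browsers, so drop it first.
    $url = trim($url);

    // parse_url() returns null when the URL has no scheme component.
    $scheme = parse_url($url, PHP_URL_SCHEME);

    return is_string($scheme)
        && in_array(strtolower($scheme), $allowed, true);
}
```

Checking against an allow-list of schemes, rather than blocking javascript: specifically, also rejects other schemes that were never intended as redirect targets.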

Open Redirect in Combination with Other Attacks

As we’ve mentioned before, most people just assume Open Redirects are always tied to phishing scams and social engineering. But they underestimate Open Redirects and how they can be used in conjunction with various attacks.

OAuth Flaw

Let’s discuss how an Open Redirect can be used to steal users’ confidential information and data. An OAuth flow is an attractive target when the victim signs up for or logs into a service through a popular platform like Google, Facebook, Twitter, or LinkedIn. A link sends the victim to the legitimate website in question (Instagram, for example), where the victim enters their login information. A redirect then sends the victim to a bogus website that looks identical to the real one and asks them to enter their data again, claiming that the username or password was incorrect. That’s how the information is stolen, and the attacker can then exploit it in many ways. Facebook deals with this by requiring the redirect_uri to match a pre-configured URL; if there is a mismatch, the redirection is denied. Unfortunately, not every platform and service does this.
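
The redirect_uri check described above can be sketched as an exact comparison against values registered in advance. This is a minimal illustration, not any platform's actual implementation. The registry constant, the client ID, and the URLs are all hypothetical.

```php
<?php
// Hypothetical registry: client ID => redirect URIs configured in advance.
const REGISTERED_REDIRECT_URIS = [
    'example-client' => ['https://app.example.com/oauth/callback'],
];

// Returns true only when the supplied redirect_uri is an exact,
// case-sensitive match for a URI registered for this client.
// Prefix or substring matching is deliberately avoided.
function is_registered_redirect_uri(string $clientId, string $redirectUri): bool
{
    $registered = REGISTERED_REDIRECT_URIS[$clientId] ?? [];

    return in_array($redirectUri, $registered, true);
}
```

With exact matching, a lookalike host or a value that merely starts with the registered URI does not pass the check.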

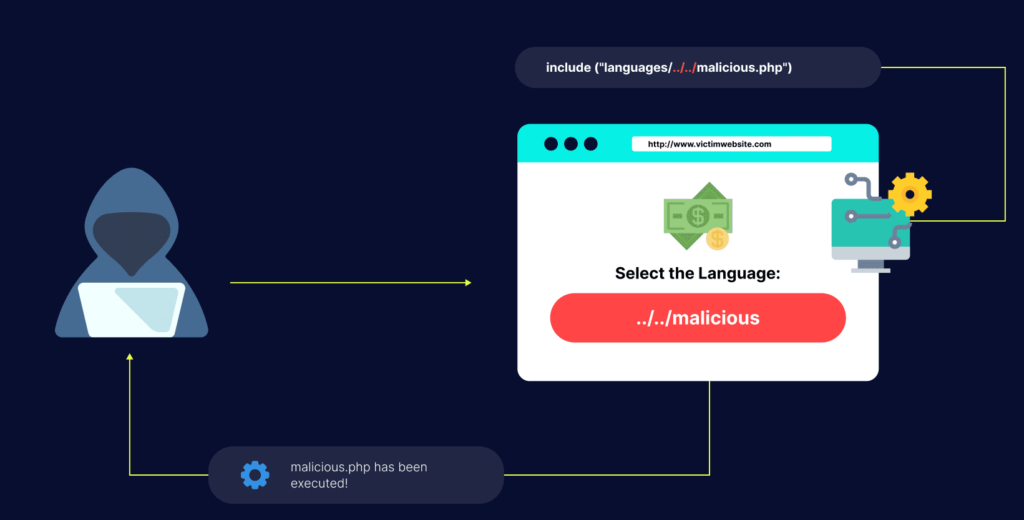

Open Redirect Used for Server Side Request Forgery (SSRF)

In addition to the OAuth flaw, Open Redirects can be combined with Server-Side Request Forgery (SSRF) and the Cross-Site Scripting (XSS) Auditor bypass. SSRF is an attack in which the attacker makes a server send requests on their behalf, which can compromise that server. Exploiting an SSRF vulnerability lets an attacker target internal systems that sit behind a firewall or filters. An Open Redirect is extremely useful for bypassing these filters: the attacker requests content from a domain the filter permits, and the Open Redirect on that domain then redirects the request to any number of other places. By combining an Open Redirect with SSRF, an attacker gets free rein to reach locations the filter was meant to block.
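
A filter that checks only the first URL is what makes this combination work, so one defensive idea is to follow redirects manually and re-check every hop. The sketch below illustrates that idea under stated assumptions. The allow-list, the function names, and the `$nextLocation` callback (which stands in for a real HTTP client with automatic redirects disabled) are hypothetical, and relative Location values are not handled.

```php
<?php
// Hypothetical allow-list of hosts the server is permitted to fetch from.
const FETCH_ALLOWED_HOSTS = ['cdn.example.com'];

// True when the URL is absolute http(s) and its host is on the allow-list.
function is_fetch_allowed(string $url): bool
{
    $scheme = parse_url($url, PHP_URL_SCHEME);
    $host = parse_url($url, PHP_URL_HOST);

    return is_string($scheme) && is_string($host)
        && in_array(strtolower($scheme), ['http', 'https'], true)
        && in_array(strtolower($host), FETCH_ALLOWED_HOSTS, true);
}

// Follows redirects one hop at a time and validates each hop.
// $nextLocation returns the Location value for a URL, or null when the
// response is not a redirect. Returns the final URL, or null if refused.
function resolve_with_validation(string $url, callable $nextLocation, int $maxHops = 5): ?string
{
    for ($hop = 0; $hop <= $maxHops; $hop++) {
        if (!is_fetch_allowed($url)) {
            return null; // a hop left the allow-list: refuse the request
        }
        $location = $nextLocation($url);
        if ($location === null) {
            return $url; // final destination, validated on every hop
        }
        $url = $location;
    }

    return null; // too many redirects
}
```

In this sketch, a permitted URL that redirects to an internal address is refused at the second hop instead of being fetched.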

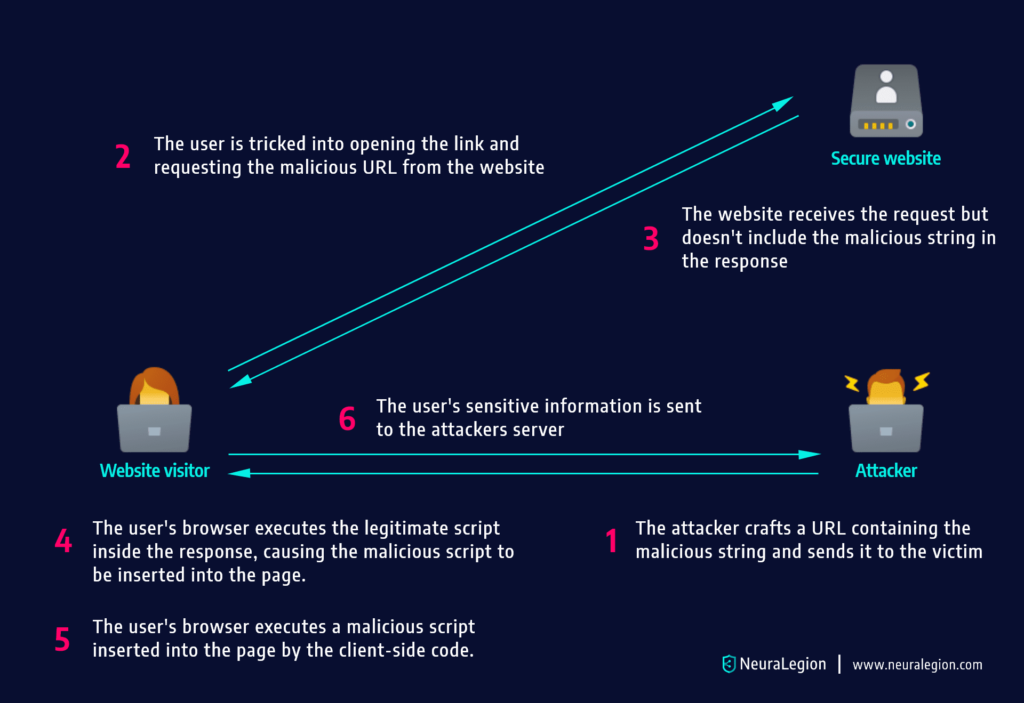

Open Redirect XSS Auditor Bypass

Then there’s the Cross-Site Scripting (XSS) Auditor bypass. Older versions of Google Chrome, for example, had a built-in XSS Auditor that stopped most attacks from going through.

However, there is a way to bypass it by using an Open Redirect. The XSS Auditor has no way to stop an attacker from including scripts that are hosted on the same domain as the page. An Open Redirect on that domain therefore lets the attacker load a script through a same-domain URL and avoid the XSS Auditor.

Open Redirect Vulnerability Fix

There are several ways to avoid open redirect vulnerabilities. Here are key methods recommended by the Open Web Application Security Project (OWASP):

- Do not use forwards and redirects.

- Do not allow URLs as user input for a destination.

- If it is absolutely necessary to accept a destination from users, ask them to provide a short name, token, or ID that is mapped server-side to the full target URL. This method provides a high level of protection against attacks that tamper with URLs. However, you must be careful not to introduce an enumeration vulnerability that allows users to cycle through IDs and find all possible redirect targets.

- When user input cannot be avoided, you should make sure that all supplied values are valid, are appropriate for the application, and are authorized for each user.

- Create a list of all trusted URLs (as a list of hosts or a regex) and use it to sanitize input. Prefer an allow-list approach when creating this list, instead of a block list.

- Force redirects to first go to a page that notifies users that they are being redirected out of the website. The message should clearly display the destination and ask users to click on a link to confirm that they want to move to the new destination.
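
Two of the recommendations above, the server-side token mapping and the allow-list of trusted hosts, can be sketched as follows. This is a minimal illustration rather than a complete implementation. The tokens, hosts, constant names, and function names are hypothetical.

```php
<?php
// Hypothetical map from short tokens to full redirect targets.
const REDIRECT_TARGETS = [
    'docs'    => 'https://docs.example.com/',
    'support' => 'https://support.example.com/contact',
];

// Hypothetical allow-list of hosts, for cases where a URL must be accepted.
const ALLOWED_REDIRECT_HOSTS = ['docs.example.com', 'support.example.com'];

// Safe fallback used whenever the input is not acceptable.
const DEFAULT_REDIRECT = '/';

// Option 1: resolve a token server-side; unknown tokens get the fallback.
function resolve_redirect_token(string $token): string
{
    return REDIRECT_TARGETS[$token] ?? DEFAULT_REDIRECT;
}

// Option 2: accept a URL only if it is absolute http(s) and its host is an
// exact match for an allow-listed host (no substring or suffix matching).
function validate_redirect_url(string $url): string
{
    // Browsers may treat backslashes like slashes and ignore some control
    // characters, which parse_url() does not, so reject them outright.
    if (preg_match('/[\x00-\x20\\\\]/', $url) === 1) {
        return DEFAULT_REDIRECT;
    }

    $parts = parse_url($url);
    if ($parts === false || !isset($parts['scheme'], $parts['host'])) {
        return DEFAULT_REDIRECT;
    }

    $schemeOk = in_array(strtolower($parts['scheme']), ['http', 'https'], true);
    $hostOk = in_array(strtolower($parts['host']), ALLOWED_REDIRECT_HOSTS, true);

    return ($schemeOk && $hostOk) ? $url : DEFAULT_REDIRECT;
}

// Usage inside a request handler (note the exit after the header):
// header('Location: ' . validate_redirect_url($_GET['url'] ?? ''));
// exit;
```

Falling back to a fixed default, instead of returning an error that echoes the input, keeps the handler from redirecting anywhere the application did not choose in advance.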

Detecting and Preventing Open Redirect with Bright

Another way to address Open Redirect vulnerabilities is to use Bright, a black-box security testing solution that examines your applications and APIs to find vulnerabilities.

Bright is an automatic scanner that finds both standard and major security vulnerabilities on its own, without any human assistance. It helps with Open Redirect vulnerabilities by locating them swiftly and sending alerts with remediation guidelines to developers, or by automatically opening tickets in a bug tracking tool.