DevSecOps is a strategic approach that unites development, security, operations, and infrastructure as code (IaC) in a continuous and automated delivery cycle.

DevSecOps aims to monitor, automate, and implement security during all software lifecycle stages, including the planning, development, building, testing, deployment, operation, and monitoring phases. By implementing security in all steps of the software development process, you reduce the risk of security issues in production, minimize the cost of compliance, and deliver software faster.

DevSecOps means that all employees and team members need to take responsibility for security from the very start. They must also make effective decisions at each stage of the development lifecycle and implement them without compromising on security.

This is part of an extensive series of guides about cybersecurity.

In this article:

- DevSecOps vs DevOps

- What Is Software Development Life Cycle (SDLC) Security?

- Implementing DevSecOps in the SDLC

- DevSecOps Tools

- DevSecOps Best Practices

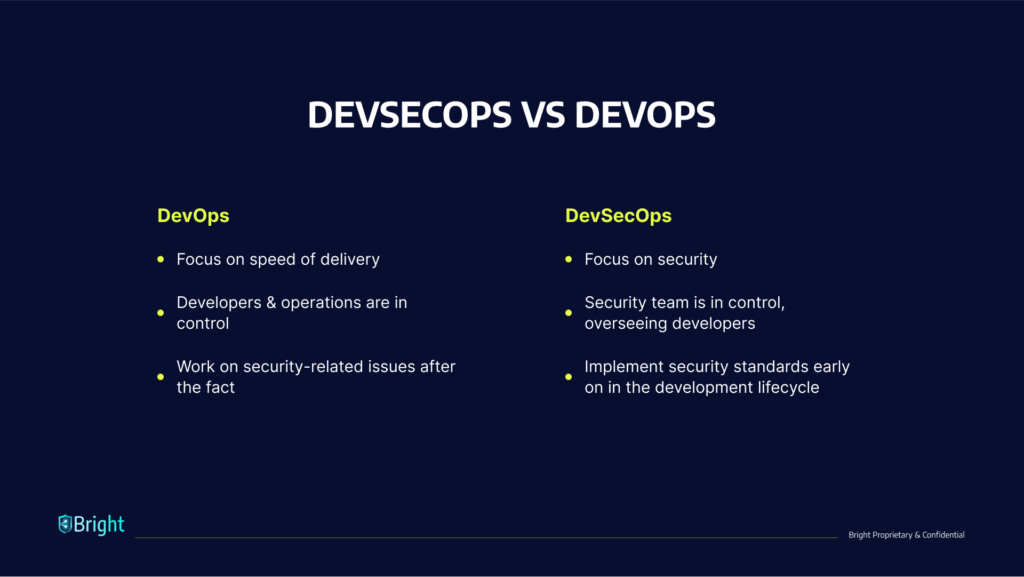

DevSecOps vs DevOps

DevOps fosters collaboration between development and operations teams during the application development and release process. These teams work in unison and adopt shared tools and KPIs. The DevOps approach aims to increase the pace of deployments while keeping the application efficient and predictable.

A DevOps engineer focuses on deploying updates to an application as quickly as possible with limited disruption to the user experience. Because they focus on increasing the speed of delivery, DevOps teams do not always regard security threats as a high priority, resulting in the build-up of vulnerabilities that can negatively affect the application, proprietary company assets, and end-user data.

DevSecOps is an extension of DevOps. It arose as development teams started to recognize that the DevOps model does not sufficiently address security issues. Rather than retrofitting security into the build, IT and security professionals developed DevSecOps to integrate security management from the outset and throughout the development process. This way, application security starts at the beginning of the build process rather than at the final stages of the development pipeline.

With this strategy, a DevSecOps engineer aims to ensure that applications are secure against attacks before they are released to users and remain secure through later updates. DevSecOps holds that developers should write code with security in mind. In essence, it addresses the security issues that DevOps alone does not cover.

Shift Left Security

‘Shift left’ is a core component of DevSecOps. It encourages developers to move security from the end (right) to the beginning (left) of the DevOps process. In a DevSecOps environment, the DevSecOps team members integrate security into the development process from the onset.

An organization that adopts DevSecOps includes security engineers and cybersecurity architects in its development team. Their role is to ensure that all components and configuration items in the stack are documented, patched, and securely configured.

Shifting left lets the DevSecOps team identify security risks and weaknesses early and address them immediately. In this way, the team builds the product and implements security at the same time, rather than adding it at the end.

What Is Software Development Life Cycle (SDLC) Security?

A software development life cycle (SDLC) is a framework that structures the creation of an application from inception to decommissioning. Over time, many SDLC models have emerged, from waterfall and iterative models to more recent agile and CI/CD approaches, which increase the frequency and speed of deployment.

Generally speaking, SDLCs feature the following phases:

- Planning and requirements

- Architecture and design

- Test planning

- Coding

- Testing and results

- Release and maintenance

Previously, organizations carried out security-related activities exclusively as a testing component during the last part of the SDLC. Consequently, they wouldn’t discover flaws, bugs, or other vulnerabilities until it was late in the process and more time-consuming and expensive to fix. In some cases, they would miss essential security vulnerabilities altogether.

Research from IBM shows that it costs six times more to fix a bug discovered during implementation than one identified during design. A bug found during the testing phase can be 15 times more costly to fix than one discovered during design.

Implementing DevSecOps in the SDLC

You should address five key stages to enable DevSecOps in an existing DevOps pipeline.

Secure Local Development

Begin by establishing secure working environments. When you create an application, you write source code and integrate components. Docker is useful in this phase because it lets you define services and their supporting infrastructure once and run them consistently on local machines. When using a ready-to-go Docker environment, make sure you use up-to-date Docker images and scan every image for vulnerabilities. Even images from official sources can contain vulnerabilities that developers need to patch.
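Part of this advice can be checked automatically before a full vulnerability scan runs. The Python sketch below is a hypothetical helper, not a Docker feature: it flags `FROM` lines whose base image floats on `latest` or carries no tag or digest, because an image you cannot identify precisely is hard to scan and patch consistently. Pinning does not make an image up to date on its own; it makes the version explicit so the scanner and the reviewer see the same thing. It assumes a conventional Dockerfile and treats build-argument references such as `${BASE}` as unpinned.

```python
import re

# Captures the image and optional stage alias on a FROM line, skipping a --platform flag.
FROM_RE = re.compile(
    r"^\s*FROM\s+(?:--platform=\S+\s+)?(\S+)(?:\s+AS\s+(\S+))?", re.IGNORECASE
)


def is_pinned(image: str) -> bool:
    """True if the reference carries a digest or an explicit tag other than 'latest'."""
    if "@" in image:  # digest pin, e.g. alpine@sha256:...
        return True
    name = image.rsplit("/", 1)[-1]  # drop any registry host:port prefix
    if ":" not in name:
        return False  # no tag, so Docker falls back to 'latest'
    return name.split(":", 1)[1] != "latest"


def unpinned_base_images(dockerfile: str) -> list[str]:
    """List base images that float on 'latest' or have no tag or digest."""
    stages: set[str] = set()
    flagged: list[str] = []
    for line in dockerfile.splitlines():
        match = FROM_RE.match(line)
        if not match:
            continue
        image, alias = match.group(1), match.group(2)
        # 'scratch' and earlier build stages are not pulled from a registry.
        if image.lower() != "scratch" and image not in stages and not is_pinned(image):
            flagged.append(image)
        if alias:
            stages.add(alias)
    return flagged
```

Running this as an early pipeline step, and failing when the list is not empty, keeps the later image scan meaningful.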

Version Control and Security Analysis

When several people work on the same piece of code, it is harder to identify and remediate vulnerabilities. Git-based version control systems can help here. Whenever a team member pushes code, you should run automated security tests against both your code and its dependencies.
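As a minimal example of an automated check that runs on every change, the Python sketch below scans the added lines of a unified diff (for instance, the output of `git diff --cached`) for a few secret patterns. The rule names and patterns are illustrative assumptions; dedicated secret scanners and the SAST and SCA tools described later cover far more.

```python
import re

# Illustrative rules only; real scanners ship much larger, tuned rule sets.
RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private-key-header": re.compile(r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"),
    "hardcoded-password": re.compile(r"(?i)\bpassword\s*=\s*['\"][^'\"]+['\"]"),
}


def scan_diff(diff_text: str) -> list[tuple[int, str]]:
    """Return (diff line number, rule name) for every added line that matches a rule."""
    findings = []
    for lineno, line in enumerate(diff_text.splitlines(), start=1):
        # Only inspect lines the change adds; skip the '+++ b/file' header.
        if not line.startswith("+") or line.startswith("+++"):
            continue
        for name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

In a pre-commit hook or CI job, you could feed the diff text to `scan_diff` and fail the step whenever the returned list is not empty.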

Continuous Integration and Build

When building the package or image, make sure your build system or build tool has appropriate security controls in place: it should use HTTPS and be properly hardened. Ideally, the build system should not be accessible from the internet.
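Some of these properties can be checked automatically. The Python sketch below audits a list of build-system endpoints (any URLs you pass in are your own; the ones in the example are hypothetical) and flags those that do not use HTTPS or that point at a globally routable IP address. It only classifies IP literals; deciding whether a DNS name is reachable from the internet needs network context this sketch does not have.

```python
import ipaddress
from urllib.parse import urlparse


def audit_build_endpoints(urls: list[str]) -> dict[str, list[str]]:
    """Flag endpoints that skip HTTPS or use a globally routable IP literal."""
    report: dict[str, list[str]] = {"not_https": [], "public_ip": []}
    for url in urls:
        parsed = urlparse(url)
        if parsed.scheme.lower() != "https":
            report["not_https"].append(url)
        try:
            # Raises ValueError for DNS names, which this sketch does not resolve.
            address = ipaddress.ip_address(parsed.hostname or "")
        except ValueError:
            continue
        if address.is_global:
            report["public_ip"].append(url)
    return report
```

For example, `audit_build_endpoints(["https://10.0.4.12:8443/build", "http://artifacts.internal.example/repo"])` would report the second URL under `not_https` and nothing under `public_ip`, since `10.0.4.12` is a private address.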

Promotion and Deployment

When you deploy your application to an environment, inject environment variables and credentials through your CI/CD tool and manage them as secrets. Encrypt these secrets and control access to them so they stay secure.
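A minimal Python sketch of the application side of this pattern, assuming the CI/CD tool injects secrets as environment variables (the variable name `DB_PASSWORD` used below is hypothetical): read each secret at startup, fail fast if it is missing, and redact its value from anything you log.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret was not injected into the environment."""


def require_secret(name: str) -> str:
    """Return the secret's value, or stop immediately if it was never injected."""
    value = os.environ.get(name)
    if not value:
        # Fail fast instead of falling back to a hard-coded default credential.
        raise MissingSecretError(f"required secret {name!r} is not set")
    return value


def redact(message: str, secrets: list[str]) -> str:
    """Replace every occurrence of each secret value before the message is logged."""
    for secret in secrets:
        if secret:
            message = message.replace(secret, "[REDACTED]")
    return message
```

Encryption at rest is the job of the CI/CD tool's secret store or a dedicated secrets manager; this code only covers how the application consumes the value, for example `password = require_secret("DB_PASSWORD")`.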

Infrastructure Security

When you deploy your application, ensure that you run a firewall and an intrusion detection system (IDS) on all container hosts. Security teams must monitor the logs and alerts from these tools and respond to them quickly.

DevSecOps Tools

Here are a few categories of DevSecOps tools you can use to implement a DevSecOps process.

Dynamic Application Security Testing (DAST)

Developers use DAST tools to analyze web applications while they are running and discover security weaknesses or vulnerabilities. A DAST tool probes an application and attacks it as a cybercriminal would. This gives developers valuable information about how the application behaves, which they can use to identify where an attacker could stage an attack and to eliminate the threat.
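To make the idea concrete, here is a deliberately tiny Python sketch of one DAST-style check: it sends a unique marker in a query parameter to a running application and reports whether the marker comes back unescaped, a common first signal of reflected cross-site scripting. The marker, the `name` parameter, and the demo server are assumptions for illustration; a real DAST tool crawls the application and tries many payload families.

```python
import html
import threading
import urllib.parse
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer

MARKER = "<dast-probe-7f3a>"  # hypothetical payload, unlikely to occur naturally

# Connect to the target directly, ignoring any proxy settings in the environment.
_OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def reflects_unescaped(url: str, param: str) -> bool:
    """Request url?param=MARKER and report whether the raw marker is echoed back."""
    query = urllib.parse.urlencode({param: MARKER})
    with _OPENER.open(f"{url}?{query}", timeout=5) as response:
        body = response.read().decode("utf-8", errors="replace")
    return MARKER in body


class DemoApp(BaseHTTPRequestHandler):
    """Toy target: /unsafe echoes input verbatim, /safe HTML-escapes it first."""

    def do_GET(self) -> None:
        parsed = urllib.parse.urlparse(self.path)
        name = urllib.parse.parse_qs(parsed.query).get("name", [""])[0]
        shown = html.escape(name) if parsed.path == "/safe" else name
        body = f"<p>Hello {shown}</p>".encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args) -> None:
        pass  # silence per-request logging


def run_demo() -> tuple[bool, bool]:
    """Start the toy app on a free local port, probe both routes, then stop it."""
    server = HTTPServer(("127.0.0.1", 0), DemoApp)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base = f"http://127.0.0.1:{server.server_port}"
    try:
        return (reflects_unescaped(f"{base}/unsafe", "name"),
                reflects_unescaped(f"{base}/safe", "name"))
    finally:
        server.shutdown()
        server.server_close()
```

`run_demo()` returns `(True, False)`: the marker survives on `/unsafe` and is neutralized by escaping on `/safe`. Only point a probe like this at applications you are authorized to test.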

Bright is built from the ground up for developers and integrates easily into your DevOps pipelines. With Bright, you can create end-to-end deployment pipelines in minutes with security testing as an integral part, without slowing you down or generating excessive noise, so that secure products are deployed through a streamlined DevSecOps process.

Static Application Security Testing (SAST)

SAST tools can help organizations identify vulnerabilities in their proprietary code. Developers should know about and use SAST tools as an automated component of their development process, which will help them identify and remediate security weaknesses early in the DevOps process. Common static analysis tools include Veracode and SonarQube.
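Commercial SAST tools are far more sophisticated, but the core idea, inspecting source code without running it, fits in a few lines. This Python sketch uses the standard `ast` module to flag two risky patterns in Python source: calls to `eval` or `exec`, and any call passing `shell=True` (as in `subprocess.run(cmd, shell=True)`). The rule set is an illustrative assumption and says nothing about how Veracode or SonarQube work internally.

```python
import ast

DANGEROUS_CALLS = {"eval", "exec"}


def find_issues(source: str, filename: str = "<input>") -> list[tuple[int, str]]:
    """Parse (but never execute) the source and return (line, message) findings."""
    tree = ast.parse(source, filename=filename)
    issues = []
    for node in ast.walk(tree):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in DANGEROUS_CALLS:
            issues.append((node.lineno, f"call to {func.id}()"))
        # Any call with a literal shell=True keyword argument.
        for kw in node.keywords:
            if kw.arg == "shell" and isinstance(kw.value, ast.Constant) and kw.value.value is True:
                issues.append((node.lineno, "shell=True"))
    return sorted(issues)  # ast.walk order is not source order
```

Because the check works on the syntax tree, it runs in milliseconds and fits naturally into a pre-commit hook or the first stage of a CI pipeline.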

Software Composition Analysis (SCA)

SCA involves monitoring and managing license compliance and security vulnerabilities in the open-source components your code depends on. It helps you understand which open-source components are in use, what their dependencies are, and which open-source licenses they carry.

Advanced SCA tools offer policy enforcement: they can block downloads of malicious binaries, fail a build if open-source components have vulnerabilities or license issues, and alert security teams. Examples of SCA tools include WhiteSource, GitLab, and JFrog Xray.
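The Python sketch below illustrates the policy-enforcement idea on a `requirements.txt`-style dependency list. The package names, the advisory ID, and the license data are all hypothetical stand-ins for the curated vulnerability and license databases a real SCA tool queries; a build step would fail whenever the returned list is not empty.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical data; real SCA tools query curated vulnerability and license databases.
ADVISORIES = {("examplelib", "1.2.0"): "EXAMPLE-2024-0001"}
LICENSES = {"examplelib": "MIT", "otherlib": "AGPL-3.0", "safelib": "Apache-2.0"}
ALLOWED_LICENSES = {"MIT", "Apache-2.0", "BSD-3-Clause"}


@dataclass(frozen=True)
class Violation:
    package: str
    reason: str


def parse_requirements(text: str) -> list[tuple[str, Optional[str]]]:
    """Parse 'name==version' lines; version is None when the line is not pinned."""
    pins = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        name, sep, version = line.partition("==")
        if not sep:
            # Keep only the project name from specifiers such as 'requests>=2.0'.
            name = re.split(r"[<>=!~;\[\s]", line, maxsplit=1)[0]
        pins.append((name.strip().lower(), version.strip() if sep else None))
    return pins


def check_policy(requirements: str) -> list[Violation]:
    """Collect pinning, vulnerability, and license violations for each dependency."""
    violations = []
    for name, version in parse_requirements(requirements):
        if version is None:
            violations.append(Violation(name, "version not pinned"))
        elif (name, version) in ADVISORIES:
            violations.append(Violation(name, f"known vulnerability {ADVISORIES[(name, version)]}"))
        license_id = LICENSES.get(name, "UNKNOWN")
        if license_id not in ALLOWED_LICENSES:
            violations.append(Violation(name, f"license {license_id} not allowed"))
    return violations
```

Treating an unknown license as a violation is a deliberately strict default; a team might instead route such packages to manual review.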

Container Runtime Security

Container runtime security tools examine containers within their runtime environment. These tools can add a firewall to protect container hosts, prevent unauthorized network communication between containers, discover anomalies according to behavioral analytics, and more. Examples of runtime protection tools are Aqua Security, Rezilion, and NeuVector.
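Runtime tools enforce their policies at the network and kernel level, which is beyond a short example, but the decision logic behind two of the capabilities above can be sketched in Python. The service names, ports, and process baseline are hypothetical. The allowlist check mirrors blocking unauthorized container-to-container traffic, and the baseline comparison is a very simple stand-in for behavioral anomaly detection.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Connection:
    src: str
    dst: str
    port: int


# Hypothetical policy: the only service-to-service flows that are allowed.
ALLOWED_FLOWS = {("web", "api", 8080), ("api", "db", 5432)}

# Hypothetical baseline: processes observed in each container during a known-good run.
BASELINE = {"web": {"nginx"}, "api": {"python"}, "db": {"postgres"}}


def unauthorized_connections(observed: list[Connection]) -> list[Connection]:
    """Connections that match no allowlisted (source, destination, port) flow."""
    return [c for c in observed if (c.src, c.dst, c.port) not in ALLOWED_FLOWS]


def anomalous_processes(observed: dict[str, set[str]]) -> dict[str, set[str]]:
    """Processes seen in a container that were never part of its baseline."""
    anomalies = {}
    for container, processes in observed.items():
        unexpected = processes - BASELINE.get(container, set())
        if unexpected:
            anomalies[container] = unexpected
    return anomalies
```

A shell process appearing in a web container, or the web tier talking straight to the database, are the kinds of deviations these checks surface.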


6 DevSecOps Best Practices

Here are a few best practices you can use to practice DevSecOps more effectively.


Automate Tools and Processes

Automation is essential for balancing security, speed, and scale. DevOps already emphasizes automation, and the same is true of DevSecOps. Automating security processes and tools helps teams adhere to DevSecOps best practices.

Automation ensures that developers and security professionals use tools and processes in a repeatable, reliable, and consistent way. It is essential to know which security processes and activities can be fully automated and which require some manual intervention.

An effective automation strategy also depends on the technology and tools in use. One thing to consider is whether a tool exposes enough interfaces to integrate with the other subsystems in your pipeline.
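As an illustration of splitting automated work from manual work, the Python sketch below shows a hypothetical pipeline gate that consumes findings from several tools: anything at or above one severity fails the build automatically, a middle band is routed to a person for review, and the rest passes. The severity scale and thresholds are assumptions to tune for your own pipeline.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


@dataclass(frozen=True)
class Finding:
    tool: str
    rule: str
    severity: Severity


@dataclass
class GateResult:
    passed: bool
    blocking: list[Finding]
    needs_review: list[Finding]


def security_gate(findings: list[Finding],
                  block_at: Severity = Severity.HIGH,
                  review_at: Severity = Severity.MEDIUM) -> GateResult:
    """Fail automatically on severe findings; queue the middle band for manual review."""
    blocking = [f for f in findings if f.severity >= block_at]
    needs_review = [f for f in findings if review_at <= f.severity < block_at]
    return GateResult(passed=not blocking, blocking=blocking, needs_review=needs_review)
```

Because every tool's output is normalized into `Finding`, adding another scanner only requires a small adapter, which is where a tool's integration interfaces matter.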

Invest In Security Education

Security sits at the intersection of compliance and engineering. Organizations should promote teamwork between development engineers, compliance teams, and operations teams so that all employees understand the organization’s security posture and adhere to the same standards.

Everyone who contributes to the delivery process must be aware of the fundamental principles of application security. They should also know about application security testing, the Open Web Application Security Project (OWASP) Top 10, and additional secure coding practices.
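Injection flaws appear in the OWASP Top 10, and parameterized queries are one of the standard defenses against SQL injection. The Python sketch below uses the standard-library `sqlite3` module with a hypothetical `users` table to contrast string-built SQL with a parameterized query.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """In-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple[int]]:
    # Vulnerable: user input becomes part of the SQL text itself.
    return conn.execute(f"SELECT id FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple[int]]:
    # Parameterized: the driver binds name as a value, never as SQL.
    return conn.execute("SELECT id FROM users WHERE name = ?", (name,)).fetchall()
```

With the input `' OR '1'='1`, the unsafe version returns every row in the table, while the safe version returns an empty list because no user has that literal name.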

Developers should understand compliance checks and threat models, and have a working knowledge of how to assess risk and exposure and establish security measures.

Promote a Security Culture

Effective leadership promotes a healthy culture, which drives change within the organization. In DevSecOps, it is essential to clearly communicate who is responsible for product ownership and for the security of each process. Once responsibilities are clear, engineers and developers can take ownership of their tasks and of the process.

DevSecOps teams should develop a system that suits them and use the protocols and technologies that serve their current project and team. When the team is empowered to create a workflow environment that meets its needs, it becomes invested in the outcome.


Recruit Security Champions

A security champion has both a motivational and an educational role. They encourage and engage with all employees, helping them learn, use, and stay committed to security practices. Security champions need not be accomplished security professionals, but they should have enough knowledge to answer fundamental questions and bridge the gap between information security specialists and other employees.

In large organizations, particularly those with several offices, security champions make sure that up-to-date security information is communicated throughout their departments. They can also assist with real-world security simulations and training.

If an actual breach or attack occurs, security champions play an essential role in mitigating damage. A critical factor in successful phishing attacks is often the delay in reporting the incident, frequently out of fear of repercussions or embarrassment. A security champion must therefore be someone people feel comfortable approaching when real-life security issues occur.

Treat Security Vulnerabilities as Software Defects

Organizations often track security vulnerabilities differently from functional and quality defects and store the findings in separate systems. As a result, teams have less visibility into the overall security posture of their projects.

Keeping quality and security issues in one place helps teams treat both kinds of issues with the same degree of importance. However, security alerts, especially those from automated scanning tools, can include false positives, and it can be difficult to get developers to examine and address each of them.

One way to address this problem is to fine-tune the security tooling over time by studying historical findings and application data. You can also apply custom rulesets and filters so that the tool reports only critical issues.
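One way to act on both ideas is to normalize scanner output into the same issue format your team uses for ordinary bugs, while filtering out suppressed false positives and low-severity noise. The Python sketch below is hypothetical: the ticket fields, severity names, and fingerprint scheme are assumptions, and a real integration would call your issue tracker's API.

```python
import hashlib
from dataclasses import dataclass

SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


@dataclass(frozen=True)
class ScanFinding:
    rule_id: str
    file: str
    line: int
    severity: str  # one of the SEVERITY_RANK keys


def fingerprint(f: ScanFinding) -> str:
    """Stable ID so repeated scans map the same finding to one ticket.

    Line numbers shift as code changes; production tools use sturdier fingerprints.
    """
    key = f"{f.rule_id}|{f.file}|{f.line}"
    return hashlib.sha256(key.encode("utf-8")).hexdigest()[:12]


def to_tickets(findings: list[ScanFinding],
               suppressed: frozenset[str] = frozenset(),
               min_severity: str = "high") -> list[dict]:
    """Turn findings into bug-style tickets, skipping suppressed and low-severity ones."""
    threshold = SEVERITY_RANK[min_severity]
    tickets: dict[str, dict] = {}
    for f in findings:
        fp = fingerprint(f)
        if fp in suppressed or SEVERITY_RANK[f.severity] < threshold:
            continue
        tickets[fp] = {
            "id": fp,
            "type": "bug",  # same type as functional defects
            "labels": ["security", f.severity],
            "title": f"[{f.rule_id}] {f.file}:{f.line}",
        }
    return list(tickets.values())
```

Adding a fingerprint to `suppressed` after a developer confirms a false positive is one concrete way to fine-tune the tooling from historical findings.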

Achieve Traceability, Auditability, and Visibility

Implementing auditability, visibility, and traceability in a DevSecOps process can foster a deeper understanding and a safer environment:

- Traceability: lets you track configuration items across the development cycle, back to where requirements are introduced into the code. This strengthens your organization’s control framework because it helps maintain compliance, minimize bugs, ensure secure code during application development, and improve code maintainability.

- Auditability: essential for maintaining compliance with security controls. Procedural, administrative, and technical security controls must be well documented and auditable, and all team members should uphold them.

- Visibility: means the organization has a monitoring system that oversees operations, sends alerts, and raises awareness of cyberattacks and changes as they happen. It should also provide accountability throughout the entire project lifecycle.
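A minimal sketch of how traceability and auditability can be supported in code: an append-only log in which each entry records a pipeline event (here, hypothetical links between a requirement ID, a commit, and the actor) and includes the hash of the previous entry, so later tampering breaks the chain. This is a plain hash chain in Python, not a substitute for a signed, access-controlled audit store.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with a canonical JSON form of the event."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: list[dict], event: dict) -> dict:
    """Append an event whose hash covers both its content and the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"prev": prev_hash, "event": event, "hash": _digest(prev_hash, event)}
    log.append(entry)
    return entry


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; editing any entry, or dropping or reordering earlier ones, fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Truncating the end of the log is not detected by the chain alone, which is one reason real audit stores also sign or externally anchor the latest hash.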
